Algorithms (Al, for short) tell your computer what to do. They solve problems—like shifting into low power mode when your battery charge is less than 20%; perform tasks—like downloading new mail; and make decisions—like muting the GPS voice directions so you can take a phone call in your car. Until recently, most algorithmic instructions involved machine communications. Algorithms monitor the computer’s internal conditions (e.g., battery power). When a certain threshold is reached (e.g., less than 20% battery remaining), Al communicates to the computer: shift to low power mode; and to the user: plug into external power.
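
For readers who like to see the gears turn, here is a minimal Python sketch of the battery rule described above. The 20% threshold comes from the example; the function name `battery_rule` and the `plugged_in` flag are my own illustrative assumptions, not how any real operating system does it.

```python
LOW_POWER_THRESHOLD = 20  # percent; the 20% figure from the example above


def battery_rule(charge_percent: float, plugged_in: bool) -> list[str]:
    """Return the messages Al would send for the current battery state.

    Hypothetical helper for illustration only.
    """
    messages = []
    # The rule fires only when the charge is below the threshold
    # and the device is not already on external power (an assumption).
    if charge_percent < LOW_POWER_THRESHOLD and not plugged_in:
        messages.append("computer: shift to low power mode")
        messages.append("user: plug into external power")
    return messages


print(battery_rule(19, plugged_in=False))
print(battery_rule(80, plugged_in=False))
```

That is the whole trick: check a condition, then send instructions. The algorithms later in this essay follow the same pattern, only with far more data.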

Humans take their computers for granted. We delegate mundane tasks to our devices (like finding directions to a restaurant) and we don’t care how the technology accomplishes them. How can your smartphone dial your friend’s phone number—a number you can’t remember? Al is better at information retrieval than you are. Your friendly algorithm finds the phone number in your contacts list and commands: input [phone number] in [dial-out program] and [execute]. Fast and easy!

With the expansion of social media and advancements in artificial intelligence, the situation has changed. Algorithms do more; they are more powerful. And humans are oblivious. On sites like Facebook, YouTube, and TikTok, algorithms have become the silent partner in human relationships. You think you’re having a two-way chat online . . . but you’re actually in a threesome. Al is the silent wingman; he sets up the dates, hires the band, and supplies the drinks. Al’s job is to keep people at the party as long as possible. He listens to every conversation.

This week’s essay is a wake-up call. Inattention and complacency have consequences. Luckily, humans have agency! We can resist manipulation by becoming more aware and knowledgeable. And that knowledge exists. Northwestern University social scientist William J. Brady and his colleagues have studied the psychological effects of social media consumption. Their analysis of algorithm-mediated communications helps us understand what’s going on, with both the platforms and the people.

In what follows, I walk you through Dr. Brady et al.’s research explaining how online interactions shape what we know and how we feel. Their research identifies positive aspects of online communities you can benefit from—and negative aspects you can defend against.

Social Media and Social Learning

Just as children observe and copy the behavior of those closest to them, individuals connect online by learning social media norms. Users pick up internet slang (tl;dr and ¯\_(ツ)_/¯), learn how to post and comment, add to threads, connect with friends (and friends-of-friends), and so on. But there’s a difference between living in society and living online.

Human communication patterns evolved over hundreds of thousands of years. By contrast, digital conversations on social media platforms have a twenty-year history.

Humans survived and prospered over millennia because of social learning. They observed other humans and copied optimal behaviors (e.g., emulating skillful hunters); they inferred others’ goals and intentions (e.g., looking for cues indicating whether someone was an enemy or a friend); and they noticed which behavior was punished (e.g., when someone stole food) or praised (e.g., when someone found a clean water source and told others). . . . Survival methods and coping mechanisms became cognitive patterns embedded in brains and culture.

Social learning in early human society developed through cooperation and problem-solving. Social knowledge was biased toward group survival and sustained communication was key. People paid attention when a high-status group member raised an alarm, signaled imminent danger, and called others to act. Even today, these social cues stimulate the brain's emotional region, eliciting quick—not thoughtful or rational—reactions. Messages are resonant when they include these qualities:

Prestige + In-group + Moralizing + Emotional

Researchers use the acronym “PRIME” for this kind of information. When human needs were simple, attention to PRIME information was functional. But in complex, multicultural societies, socially biased information can be harmful. Modern systems rely on facts and data for rational calculations. Information must be dispassionate to be useful.

Social media companies make use of the ancient affinity for PRIME messages. Tech companies understand the resonance of such communications. How to connect these motivating messages to receptive users in the audience? Put Al on the job! He’s great at sorting huge amounts of incoming data.

Facebook users upload more than 300 million photos per day and post 510,000 comments per minute, and there are 500 million Twitter posts per day. Users clearly do not have the time or attention to view all of these posts. Content algorithms must select the information we see and decide what kind of information to amplify.

Our friend Al sorts through posts and comments to select and present content that he predicts will be of interest to a particular user. With repeated interactions and more time spent online, Al’s predictions get better and better. Al learns what engages the user and boosts that content. (This explains the millions of cat videos.)

Dr. Brady’s team illustrates how it works:

Person pays attention to content (A) that appeals to their social biases, i.e., PRIME information. Al the Robot pays attention to what Person likes and gives them more of it (C, B). The more choices Person makes, the more Al learns about their preferences (A, C). Al the Robot amplifies this content by showing Person more (and more extreme) versions of the same kind of stuff (B). Over time, PRIME content proliferates on the platform.
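
To see why this loop runs away, here is a toy simulation. It is my own simplification, not Brady et al.’s model: the function `simulate_feed` and the click probabilities (Person clicks 60% of PRIME posts and 30% of neutral ones) are invented numbers chosen only to show the direction of the effect.

```python
def simulate_feed(rounds: int, prime_share: float = 0.5,
                  click_prime: float = 0.6,
                  click_neutral: float = 0.3) -> list[float]:
    """Track the share of PRIME posts in Person's feed, round by round.

    All parameters are hypothetical. Al's rule here is simple: the next
    feed mirrors where Person's clicks went in the previous round.
    """
    history = [prime_share]
    for _ in range(rounds):
        # Expected clicks on each kind of post in this round's feed.
        prime_clicks = prime_share * click_prime
        neutral_clicks = (1 - prime_share) * click_neutral
        # Al gives Person more of whatever drew the clicks.
        prime_share = prime_clicks / (prime_clicks + neutral_clicks)
        history.append(prime_share)
    return history


for round_number, share in enumerate(simulate_feed(5)):
    print(f"round {round_number}: {share:.0%} of the feed is PRIME")
```

Even though the feed starts out evenly split, a modest preference for PRIME content compounds every round. In this sketch nobody decides to flood the feed; the flood comes from the loop itself.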

Social media algorithms amplify PRIME content because they are designed to capture attention and elicit an emotional reaction from users. Al does what it takes to entertain his (unsuspecting) friends and keep them at the party for as long as possible, because attentional capture and engagement maximize profits for Al’s boss’s company’s shareholders.

What could go wrong? Well . . .

A Vast Social Experiment

The stated purpose and the actual purpose of the main social media companies are disconnected. For example, here’s Facebook’s mission statement:

Facebook's mission is to give people the power to build community and bring the world closer together.

That’s what they tell the public; it’s called marketing. If you want to know what Meta’s actual purpose is, look at what they do: sell user data to brokers, hawk online advertising, and dispatch lobbyists to prevent government regulation of tech companies. The rapid growth of social media is not from people building autonomous communities online; advances in technology and unfettered profit-seeking drive it.

Tech changes rapidly; societies evolve gradually. We are now in the “Find Out” stage . . . and the unforeseen consequences are piling up. When for-profit tech companies exploit the human affinity for PRIME information, social life is distorted, damaging psyches and communities.

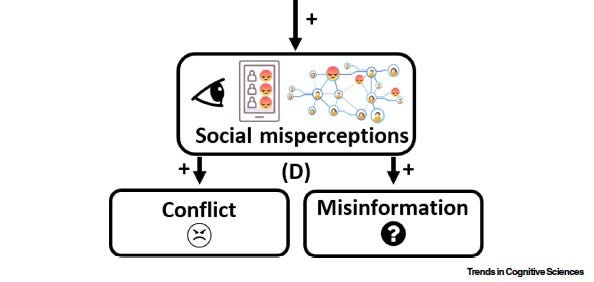

Brady et al.’s model illustrates the effects of algorithmic boosting. Negative consequences occur on two levels: the individual (Person) and social (Network).

Person sees posts that get the most emotional engagement (because Al has boosted these posts into Person’s feed). For example, Facebook has scored posts by the intensity of the reaction: an angry reaction counted for 5 points, a like for 1. Person incorrectly concludes that negativity is prevalent throughout the network; this misperception (D) stems from the algorithm’s selectivity bias towards PRIME information. Person’s mood is affected negatively and they respond with contentious posts and comments, leading to higher levels of conflict/engagement across the network.
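
Here is a small sketch of what reaction-weighted scoring looks like. The two weights (5 for anger, 1 for a like) are the ones mentioned above; the posts and their reaction counts are made up, and a real ranking system weighs many more signals than this.

```python
REACTION_WEIGHTS = {"like": 1, "angry": 5}  # weights from the example above


def engagement_score(reactions: dict[str, int]) -> int:
    """Add up reactions, counting each one at its weight."""
    return sum(REACTION_WEIGHTS.get(kind, 0) * count
               for kind, count in reactions.items())


# Hypothetical posts with invented reaction counts.
posts = {
    "vacation photos": {"like": 40, "angry": 0},
    "outrage bait": {"like": 5, "angry": 10},
}

# Highest score first: this is the order Person would see.
ranked = sorted(posts, key=lambda title: engagement_score(posts[title]),
                reverse=True)
print(ranked)
```

The vacation photos drew 40 reactions and the outrage bait only 15, yet the outrage bait scores 55 to 40 and lands at the top of the feed.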

PRIME information is socially biased, by definition. Posts with inaccurate or outrageous information predominate across the network because they receive the most responses; the algorithm further amplifies them. Thus, the network spreads misinformation (or disinformation) (D) much more effectively than factual information.

PRIME info is artificially boosted via Person-Al interactions, moving more negative and outrageous content. However, these angry or demeaning comments are generated by a minority of users. Poor Person! When outrageous utterances are overrepresented in their feed, human Person thinks the whole world has gone to shit.
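
A bit of arithmetic shows how a small minority can dominate a feed. Every number below is invented for illustration: a network of 100 posts in which only 10 are outrage posts, and a feed that shows Person the 10 highest-scoring posts.

```python
def outrage_share(items: list[tuple[str, int]]) -> float:
    """Fraction of posts in `items` that are labeled "outrage"."""
    return sum(1 for kind, _ in items if kind == "outrage") / len(items)


# Hypothetical network: (kind of post, engagement score).
network = ([("outrage", 55)] * 10
           + [("calm", 60)] * 5
           + [("calm", 20)] * 85)

# Person's feed: the 10 highest-scoring posts.
feed = sorted(network, key=lambda post: post[1], reverse=True)[:10]

print(f"outrage in the network: {outrage_share(network):.0%}")
print(f"outrage in the feed:    {outrage_share(feed):.0%}")
```

In this made-up network, outrage is one post in ten, but it fills half of what Person actually sees. The distortion comes from which posts get selected, not from what most people are saying.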

Balancing Online Engagement

Why are people spending more time online if all this bad stuff happens? 1. It’s addictive. 2. It’s community. The issue seems to be . . . how to emphasize the good stuff (community) and avoid the bad (addiction). Balancing one’s consumption to avoid addiction and enjoy community may be possible for healthy persons. But it doesn’t work at scale.

Brady’s research team concludes that the algorithm is the problem. Our brains are wired for social learning through millennia of problem-solving within human communities. When Al creates a digital environment for human interaction and exploits established communication patterns, a functional misalignment occurs. Communication among persons no longer facilitates cooperation; it promotes conflict and lies. So encouraging people to “take charge” of their social media consumption is pointless. Solutions have to be on a grander scale.

The researchers suggest two fixes: 1) Redesign the algorithm to de-emphasize PRIME content and promote the circulation of more diverse information. (I think this option is unlikely to work: social media companies have no financial incentive to change their business model.) 2) Enact government regulations that require greater transparency. Companies would have to ’fess up and admit how Al operates. Force Meta, Alphabet, X, and ByteDance to come clean to users and give individuals control over their feeds. Federal regulators could require companies to implement content moderation under specific guidelines. (Guidelines would be developed through the standard federal rulemaking procedure that incorporates citizen comments.)
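
The first fix can be pictured as a small change to the ranking rule. This is only a sketch under heavy assumptions: `prime_score` (a 0-to-1 rating of how PRIME a post is) and `prime_penalty` are hypothetical names of mine, the posts are invented, and measuring how PRIME a post is would be a hard problem in its own right.

```python
def rerank(posts: list[tuple[str, float, float]],
           prime_penalty: float = 0.0) -> list[str]:
    """Order post titles by engagement, discounted for PRIME content.

    Each post is (title, engagement, prime_score), where prime_score is a
    hypothetical 0-to-1 rating. A prime_penalty of 0 ranks by engagement alone.
    """
    def adjusted(post: tuple[str, float, float]) -> float:
        _, engagement, prime_score = post
        return engagement * (1 - prime_penalty * prime_score)

    return [title for title, _, _ in sorted(posts, key=adjusted, reverse=True)]


# Invented posts for illustration.
posts = [
    ("outrage bait", 55, 0.9),
    ("vacation photos", 40, 0.1),
    ("local news explainer", 35, 0.2),
]

print(rerank(posts))                     # engagement only
print(rerank(posts, prime_penalty=0.5))  # PRIME-heavy posts discounted
```

With no penalty, the outrage bait leads. With the penalty turned on, it drops to last place while the posts themselves stay on the platform. The code change is tiny; the business incentive to make it is the hard part.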

That might work. In the meantime . . . stay alert, moderate your social media consumption, and demand that your representatives pass legislation to regulate the tech giants.

And now, your moment of . . . reunion.

Former NY science teacher promised his 1978 class an eclipse party. He just hosted it.

When he started teaching in 1978, Patrick Moriarty passed out worksheets to his science class, showing the trajectories of upcoming eclipses. Only one was expected to pass near their hometown in Upstate New York, but watching it as a class was going to be difficult — it wouldn’t occur for nearly five decades.

‘Hey, circle that one on April 8, 2024,’ Moriarty recalled telling his students. ‘We’re going to get together on that one.’

And they did.

‘This has got to be the longest homework assignment in history,’ Thompson, now 56, recalled telling Moriarty.

Read the whole article; it’s sweet.

Notes:

Dorcas Adisa, Everything you need to know about social media algorithms.

William J. Brady, et al. (2023). “Algorithm-mediated social learning in online social networks,” Trends in Cognitive Sciences, Vol. 27, No. 10, pp. 947-960. (This journal is behind a paywall. Contact me if you want to borrow a copy.)

Health Tech Digital, Why is TikTok so addictive?

Jeremy B. Merrill and Will Oremus, Five points for anger, one for a ‘like’: How Facebook’s formula fostered rage and misinformation.

Office of the Federal Register (United States), The Rulemaking Process (pdf).

Pew Research Center, Using Social Media to Keep in Touch.
